AI — Flying over a changing landscape

Originally published on Medium

Introduction

We live in a world of constant churn, a reminder that change is the only constant. I have often wanted to explain how AI has changed everyday use cases, which are constantly evolving and yet receive very little attention. The key driving force is technology, which has reshaped how we solve problems, sometimes gradually and sometimes radically.

A Use Case Transformed: The Evolution of Technology from Rules to Reasoning

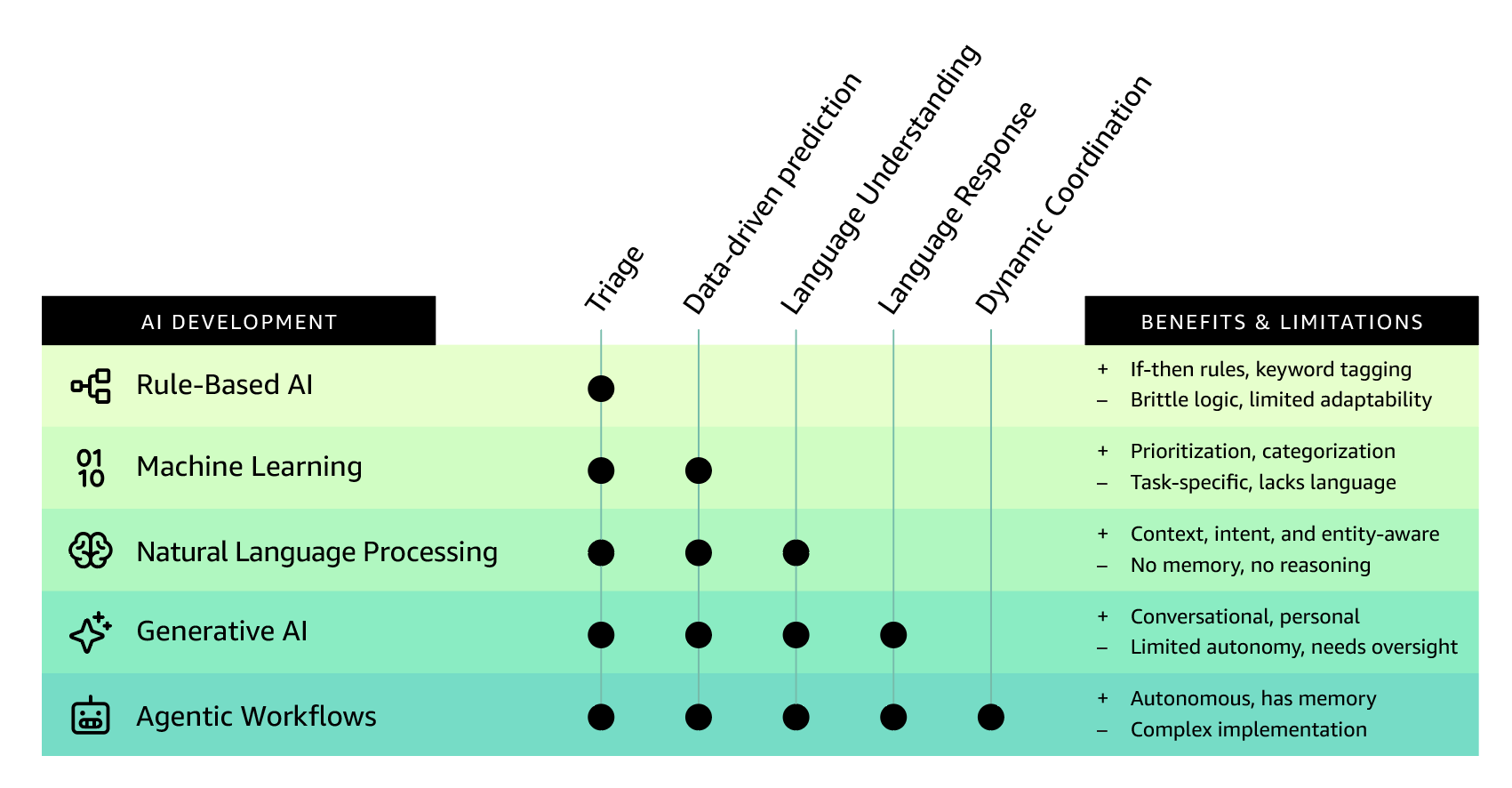

In this post, I take a simple but powerful use case — customer support — and trace its transformation across six key phases of technological evolution: from pre-AI processes to agentic workflows. This journey highlights not just technical progress, but a shift in mindset: from static tools to dynamic, autonomous problem-solving systems.

Before AI: Human-Centric Customer Support

In the early days, customer support was entirely manual and people-driven. A customer facing a billing issue had to pass through several touchpoints before they could even explain the problem. Typically, they would:

- Call or email a support center

- Wait for an available agent

- Get routed based on surface-level triage

- Have their issue resolved through predefined checklists or escalation

Such a fragmented process brought predictable challenges: long resolution times, high labor costs that led to ballooning expenditure, inconsistent outcomes that depended on agent experience, and growing tribal knowledge, with little learning shared from past issues.

This model, though serviceable, couldn't scale with growing user bases or rising expectations.

The Era of AI: Rule-Based Automation

Expert systems took shape in the 1970s. These systems predominantly used predefined "if-then" rules to emulate human decision-making. By the 1980s, commercial adoption of rule-based systems took center stage, accelerating in industries such as finance, healthcare and manufacturing. They performed best in areas such as fraud detection, loan approvals and automated diagnostics.

When rule-based automation reached mainstream business applications, it was often embedded in Business Process Management (BPM) and workflow engines. With the growth of call centers and back offices in the early to mid-1990s, it was widely used in tax preparation software, in insurance claims processing to determine eligibility, and in alert monitoring for IT systems. As the landscape changed, rule-based solutions laid the foundation for Robotic Process Automation (RPA) in the 2000s and the first AI-driven automation in the early 2010s.

The first wave of AI introduced deterministic rule engines and basic decision trees. These systems:

- Automated keyword-based email tagging

- Routed tickets using if-then logic

- Offered simple chatbot experiences based on scripted paths
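To make the if-then idea concrete, here is a minimal sketch of keyword-based ticket routing. The queue names, keywords and the `route_ticket` helper are my own illustrative assumptions, not taken from any particular product.

```python
import re

# Hypothetical routing rules: (queue name, trigger keywords).
# Order matters, because the first matching rule wins.
ROUTING_RULES = [
    ("billing", {"invoice", "refund", "charge", "payment"}),
    ("technical", {"error", "crash", "login", "password"}),
]
DEFAULT_QUEUE = "general"


def route_ticket(text: str) -> str:
    """Return the queue for a ticket using plain if-then keyword rules."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    for queue, keywords in ROUTING_RULES:
        if words & keywords:  # any trigger keyword present in the ticket
            return queue
    return DEFAULT_QUEUE


print(route_ticket("I was charged twice, please refund me"))  # prints "billing"
print(route_ticket("My bill looks wrong"))  # prints "general"
```

The second ticket is clearly about billing, yet it falls through to the general queue because nobody wrote a rule for the word "bill". Every such gap has to be closed by hand.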

This approach brought several improvements: it reduced the human load on repetitive tasks and introduced basic automation and faster triage.

Over the decades, several challenges were addressed, but key limitations remained. Rule-based automation couldn't handle ambiguous or novel queries, had little or no capacity to learn from feedback unless changes were hard-coded, and required rigid rule maintenance that created bottlenecks.

To summarize, AI here acted like a calculator: efficient, but not intelligent.

Machine Learning: Pattern Recognition and Prediction

Machine Learning (ML) changed the game by introducing pattern recognition at scale. With access to historical data, ML models could predict ticket categories (billing, tech, general inquiry), prioritize queries based on urgency or sentiment, and recommend resolution paths based on similar past cases. Pattern recognition also helped bring data science into mainstream business decision-making, since mining historical data could inform decisions with higher success rates.
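As a rough illustration of predicting ticket categories from historical data, here is a minimal sketch of a Naive Bayes text classifier written with the standard library only. The training tickets are made up, and Naive Bayes is simply one convenient model to show the idea; a production system would more likely use an ML library and far more data.

```python
import math
import re
from collections import Counter, defaultdict

# Tiny, made-up training set of (ticket text, category) pairs.
TRAINING_DATA = [
    ("I was charged twice on my invoice", "billing"),
    ("Please refund my last payment", "billing"),
    ("My invoice shows the wrong amount", "billing"),
    ("The app crashes when I log in", "tech"),
    ("I get an error after resetting my password", "tech"),
    ("The website will not load on my phone", "tech"),
    ("What are your opening hours", "general"),
    ("Where can I find your store address", "general"),
]


def tokenize(text):
    return re.findall(r"[a-z]+", text.lower())


def train(examples):
    """Count words per category for a multinomial Naive Bayes model."""
    word_counts = defaultdict(Counter)
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(tokenize(text))
    vocabulary = {w for counts in word_counts.values() for w in counts}
    return word_counts, label_counts, vocabulary


def predict(text, model):
    """Return the category with the highest log-probability."""
    word_counts, label_counts, vocabulary = model
    total_docs = sum(label_counts.values())
    vocab_size = len(vocabulary)
    scores = {}
    for label in label_counts:
        score = math.log(label_counts[label] / total_docs)  # class prior
        total_words = sum(word_counts[label].values())
        for word in tokenize(text):
            if word not in vocabulary:
                continue  # ignore words never seen in training
            # Laplace (add-one) smoothing avoids zero probabilities.
            score += math.log((word_counts[label][word] + 1) / (total_words + vocab_size))
        scores[label] = score
    return max(scores, key=scores.get)


model = train(TRAINING_DATA)
print(predict("I need a refund for a wrong invoice amount", model))  # prints "billing"
```

Unlike hand-written rules, nothing here says which words mean "billing". The model infers that from the labeled history, which is also why its quality depends entirely on that history.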

Key improvements included data-driven triage and resolution, personalization based on historical interactions, and less manual intervention in classification.

Although the improvements were substantial and widely adopted, limitations remained: limited explainability, models that struggled with unstructured and conversational input, and a narrow, task-specific focus that kept systems purely reactive.

Though ML improved efficiency, it didn't yet understand the "why" behind customer queries.

NLP: Understanding Language and Intent

From the mid-2000s onward, with the rise of machine learning, deep learning and, more recently, Large Language Models (LLMs such as GPT and BERT), Natural Language Processing (NLP) gained the ability to understand text at a deeper level. With NLP, systems could identify user intent and key entities, understand context within sentences, and offer multilingual support and real-time translation, among other benefits.

NLP brought several improvements such as more human-like interactions, better intent detection for chatbots, and reduced misclassification of customer issues. Several businesses continue to adopt NLP for their business needs even today.

However, limitations still existed: predefined conversation flows were static, responses were often templated rather than dynamic, and the systems continued to lack reasoning and memory.

In summary, NLP made the systems better listeners — but not yet thinkers.

Generative AI: Dynamic and Contextual Conversations

In 2014, Ian Goodfellow and his colleagues introduced Generative Adversarial Networks (GANs). A GAN consists of two neural networks, a generator and a discriminator, trained against each other, which enabled the creation of realistic images, music and more. This was the first major leap in visual content generation. It was followed in 2017 by the Transformer architecture, introduced by Google researchers in the paper "Attention Is All You Need", which is the basis for most modern generative AI language models. In 2018, Google released BERT (Bidirectional Encoder Representations from Transformers), whose core purpose was understanding language rather than generating it. This revolutionized the way NLP would be consumed in the future.

In 2018, an explosion of generative language models began with OpenAI's release of GPT (Generative Pre-trained Transformer). After subsequent releases, OpenAI launched ChatGPT in November 2022, bringing LLMs to mainstream users. This was followed by powerful open-source generative AI models such as LLaMA by Meta, Falcon by the Technology Innovation Institute, and Mistral by Mistral AI.

This leap enabled generative AI systems to hold full-fledged conversations with nuanced, creative responses, summarize customer history and offer tailored suggestions, and draft custom emails, policy clarifications or knowledge base articles in real time.

Generative AI also brought several improvements, such as hyper-personalized and scalable support, context-aware multi-turn conversations, and enhanced user satisfaction through human-like engagement.

This leap addressed many of the earlier challenges. However, advanced users highlighted new ones: the solutions are stateless unless augmented with memory, they require human oversight for sensitive cases, and they lack end-to-end task execution capabilities.
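The "stateless unless augmented with memory" point can be sketched in a few lines. Here `generate_reply` is a hypothetical stand-in for a call to a generative model (it is not a real API), and `ConversationMemory` is an assumed wrapper that replays the transcript on every call.

```python
from dataclasses import dataclass, field


def generate_reply(prompt: str) -> str:
    """Hypothetical stand-in for a generative model call.

    It is stateless: the reply depends only on the prompt it receives.
    To keep the sketch runnable, it just reports how much context it saw.
    """
    turns = prompt.count("Customer:")
    return f"(reply based on {turns} customer turn(s))"


@dataclass
class ConversationMemory:
    """Keeps the running transcript and sends all of it with each request."""

    history: list = field(default_factory=list)

    def ask(self, message: str) -> str:
        self.history.append(f"Customer: {message}")
        prompt = "\n".join(self.history)  # earlier turns travel with the new one
        reply = generate_reply(prompt)
        self.history.append(f"Assistant: {reply}")
        return reply


chat = ConversationMemory()
print(chat.ask("I was charged twice this month."))  # model sees 1 customer turn
print(chat.ask("Can you refund the second one?"))   # model sees 2 customer turns

# Without the wrapper, the follow-up arrives with no context at all.
print(generate_reply("Customer: Can you refund the second one?"))
```

In this sketch the memory lives entirely outside the model, so it is the application's job to decide what to keep, summarize or drop as the conversation grows.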

In short, Generative AI made support intelligent, but still bounded by task limitations.

Agentic Workflows: Autonomous, Multi-Agent Collaboration

The latest evolution in this ever-changing landscape is agentic AI. It combines memory, planning and tool use, often on top of multimodal models (text, image, audio, video), so that intelligent agents can work together to complete complex goals with minimal human input. In agentic workflows, foundation models power autonomous agents, digital humans and creative co-pilots in design, coding and science.

Returning to the initial example of customer support, let's look at an agentic workflow in action:

A customer query now triggers an orchestrated workflow:

- Agent 1: Extracts the issue from the conversation

- Agent 2: Pulls relevant customer history and billing data

- Agent 3: Applies company policy to assess resolution options

- Agent 4 (Coordinator): Oversees execution, ensures completion, and communicates with the customer
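The four roles above can be sketched as plain functions handing a shared case object to one another. Everything in this sketch is an assumption made for illustration: the customer records, the refund limit, and the keyword check that stands in for a language model. Real agents would also plan and call tools dynamically rather than run in a fixed order.

```python
from dataclasses import dataclass, field

# Hypothetical in-memory stand-ins for a billing system and a company policy.
CUSTOMER_RECORDS = {
    "C-100": {"name": "Ana", "charges": [49.0, 49.0]},
    "C-200": {"name": "Ben", "charges": [19.0]},
}
REFUND_LIMIT = 100.0  # assumed policy: auto-refund up to this amount


@dataclass
class Case:
    customer_id: str
    message: str
    issue: str = ""
    history: dict = field(default_factory=dict)
    resolution: str = ""


def extract_issue(case):
    """Agent 1: extract the issue (a keyword check stands in for an LLM)."""
    text = case.message.lower()
    case.issue = "duplicate_charge" if "twice" in text or "double" in text else "other"
    return case


def fetch_history(case):
    """Agent 2: pull customer history and billing data."""
    case.history = CUSTOMER_RECORDS.get(case.customer_id, {})
    return case


def apply_policy(case):
    """Agent 3: apply company policy to choose a resolution."""
    charges = case.history.get("charges", [])
    has_duplicate = len(charges) != len(set(charges))
    if case.issue == "duplicate_charge" and has_duplicate and charges[-1] <= REFUND_LIMIT:
        case.resolution = f"refund {charges[-1]:.2f}"
    else:
        case.resolution = "escalate to human"
    return case


def coordinator(customer_id, message):
    """Agent 4: run the other agents in order and report back to the customer."""
    case = Case(customer_id, message)
    for agent in (extract_issue, fetch_history, apply_policy):
        case = agent(case)
    return f"Case for {customer_id}: {case.resolution}"


print(coordinator("C-100", "I was charged twice this month"))  # Case for C-100: refund 49.00
print(coordinator("C-200", "I was charged twice this month"))  # Case for C-200: escalate to human
```

The second case shows the coordinator falling back to a human when the billing data does not support the customer's claim, which is where the human oversight mentioned earlier still fits in.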

This end-to-end orchestrated workflow brings several benefits:

- End-to-end resolution with minimal or no human input

- Persistent memory across interactions

- Goal-driven autonomy, not just task automation

- Scales effortlessly with organizational complexity

As you can see, this is not just automation; it's intelligent collaboration among specialized AI agents.

To conclude, the journey from static rules to dynamic reasoning shows how a single use case can be completely reimagined through technology.

Conclusion

We're no longer asking, "Can AI help us with this task?" We're now asking, "What goals can AI autonomously achieve for us?"

Each phase didn't just improve speed or cost — it redefined what's possible.

Want to know what the next phase of the AI journey is? Reach out to me and I will be happy to chat about it!

This blog was written by Ganesh Raam Ramadurai, with images designed by Kosal.

